Introduction

This guide explains how A/B tests are tracked and analyzed in Mapp Intelligence.

It builds on the concepts described in A/B Testing (Recommendation Performance).

Tracking Requirements

To evaluate A/B tests reliably, tracking must include the following data:

Variant Information

Test name

Assigned variant

This information must be present in all relevant tracking events.

User Interactions

Page Impressions (page views)

Product views

Add-to-basket actions

Orders

Recommendation Interactions (if applicable)

Clicks on recommended products

Interaction with recommendation elements

Revenue Data

Orders and revenue

Product-level information (if available)
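The requirements above can be illustrated with a minimal Python sketch. It assumes that tracked events have been exported into simple records. The field names (session_id, event_type, test_name, variant, product_id, revenue, units) are hypothetical and are not Mapp Intelligence parameter names. The sketch shows that every event carries the test name and assigned variant alongside the interaction and revenue data, and it flags events where that information is missing.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical event record: field names are illustrative only,
# not Mapp Intelligence tracking parameters.
@dataclass
class TrackedEvent:
    session_id: str
    event_type: str           # e.g. "page_view", "product_view", "add_to_basket", "order", "reco_click"
    test_name: Optional[str]  # name of the A/B test
    variant: Optional[str]    # variant assigned to the user
    product_id: Optional[str] = None
    revenue: float = 0.0
    units: int = 0

def has_variant_info(event: TrackedEvent) -> bool:
    """True if the event carries both the test name and the assigned variant."""
    return bool(event.test_name) and bool(event.variant)

events = [
    TrackedEvent("s1", "page_view", "reco_test", "A"),
    TrackedEvent("s1", "order", "reco_test", "A", product_id="p1", revenue=49.9, units=1),
    TrackedEvent("s2", "product_view", None, None, product_id="p2"),  # variant info missing
]

missing = [e for e in events if not has_variant_info(e)]
print(len(missing))  # 1
```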

Attribution

A/B test analysis depends on how interactions are linked to downstream conversions.

With recommendation tracking

Recommendation interactions (such as clicks) are recorded

These interactions can be linked to product views, basket actions, and orders

This enables direct attribution from recommendation to purchase

Without recommendation tracking

Only session-based analysis is possible

Conversions can be analyzed per variant

Direct attribution to specific recommendation interactions is not available
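The difference between the two cases can be sketched in Python under an assumed attribution rule: an ordered product counts as recommendation-driven if the same product was clicked in a recommendation earlier in the same session. This rule, the event type names ("reco_click", "order"), and the Event fields are hypothetical and only illustrate the idea; they are not how Mapp Intelligence computes attribution. Without recommendation click events, the set of clicked products stays empty and only session-level conversions per variant remain available.

```python
from typing import NamedTuple

class Event(NamedTuple):
    session_id: str
    timestamp: int     # any monotonically increasing value within a session
    event_type: str    # hypothetical types: "reco_click", "order"
    product_id: str
    revenue: float = 0.0

def attributed_revenue(events: list[Event]) -> float:
    """Sum order revenue for products clicked in a recommendation earlier in the same session.

    Assumed, illustrative rule: same session, same product, click before order.
    """
    clicked: set[tuple[str, str]] = set()
    total = 0.0
    # Process each session in time order so a click is seen before a later order.
    for e in sorted(events, key=lambda ev: (ev.session_id, ev.timestamp)):
        key = (e.session_id, e.product_id)
        if e.event_type == "reco_click":
            clicked.add(key)
        elif e.event_type == "order" and key in clicked:
            total += e.revenue
    return total

events = [
    Event("s1", 1, "reco_click", "p1"),
    Event("s1", 5, "order", "p1", 30.0),
    Event("s1", 6, "order", "p2", 20.0),  # never clicked in a recommendation
    Event("s2", 2, "order", "p1", 30.0),  # different session, no click
]
print(attributed_revenue(events))  # 30.0
```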

Key Metrics

Evaluate each variant using consistent metrics:

Conversion Rate (CR)

Revenue per Visit (RPV)

Average Order Value (AOV)

Units per Transaction (UPT)
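As a rough guide, these metrics are usually computed from per-variant totals as follows: CR = orders / visits, RPV = revenue / visits, AOV = revenue / orders, UPT = units / orders. The Python sketch below applies these common definitions. The VariantTotals structure and the input numbers are made up for illustration, and the exact metric definitions configured in your Mapp Intelligence account may differ.

```python
from dataclasses import dataclass

@dataclass
class VariantTotals:
    visits: int
    orders: int
    revenue: float
    units: int

def key_metrics(t: VariantTotals) -> dict[str, float]:
    """Common e-commerce definitions; a zero denominator yields 0.0."""
    def ratio(num: float, den: float) -> float:
        return num / den if den else 0.0
    return {
        "CR": ratio(t.orders, t.visits),    # conversion rate: orders per visit
        "RPV": ratio(t.revenue, t.visits),  # revenue per visit
        "AOV": ratio(t.revenue, t.orders),  # average order value: revenue per order
        "UPT": ratio(t.units, t.orders),    # units per transaction: units per order
    }

# Illustrative totals, not real data.
totals = {
    "A": VariantTotals(visits=10_000, orders=300, revenue=15_000.0, units=600),
    "B": VariantTotals(visits=10_000, orders=330, revenue=17_160.0, units=693),
}
for name, t in totals.items():
    print(name, key_metrics(t))
```

Computing all variants with the same function keeps the metrics consistent, which is what a fair comparison between variants requires.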

Analysis in Mapp Intelligence

Use the following analysis approaches to compare variants.

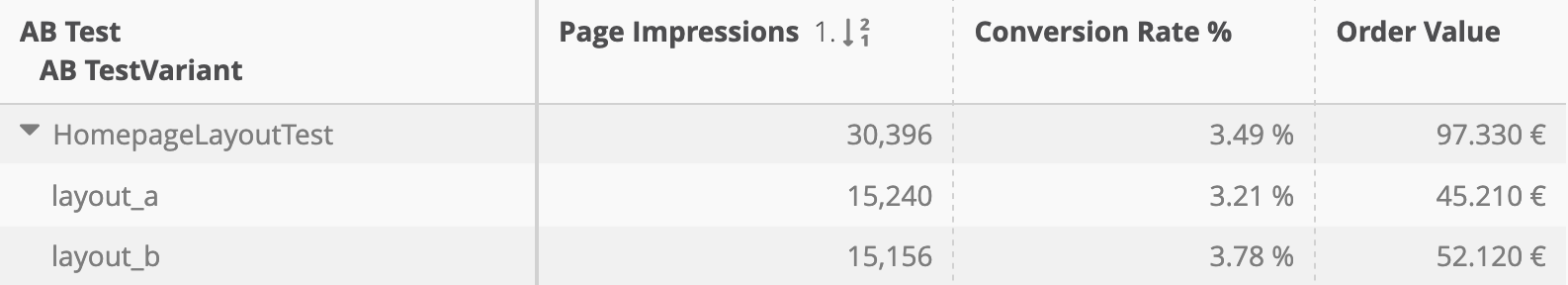

Variant Comparison

Compare overall performance:

Page Impressions

Conversion Rate

Order Value
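One simple way to express a comparison between two variants is relative lift: the change in a metric for the tested variant relative to the control. The short Python sketch below is illustrative only. The function name and the example conversion rates are assumptions, and the sketch does not test whether a difference is statistically meaningful.

```python
def relative_lift(control: float, variant: float) -> float:
    """Relative change of a variant metric versus the control (0.1 means +10 %)."""
    if control == 0:
        raise ValueError("control value is zero; relative lift is undefined")
    return (variant - control) / control

# Illustrative conversion rates for control A and variant B.
print(f"{relative_lift(0.030, 0.033):+.1%}")  # +10.0%
```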

Recommendation Performance

Analyze recommendation-specific metrics:

Clicks

Click-through rate (CTR)

Revenue (if attribution is available)
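CTR is typically the number of recommendation clicks divided by the number of times the recommendation was displayed. The sketch below assumes that recommendation impressions are available per variant. The function name and the click and impression counts are hypothetical example values.

```python
def click_through_rate(clicks: int, impressions: int) -> float:
    """CTR = recommendation clicks / recommendation impressions (0.0 if nothing was shown)."""
    return clicks / impressions if impressions else 0.0

# Illustrative (clicks, impressions) per variant.
reco_stats = {"A": (120, 4000), "B": (150, 4100)}
for name, (clicks, impressions) in reco_stats.items():
    print(f"{name}: {click_through_rate(clicks, impressions):.2%}")
# A: 3.00%
# B: 3.66%
```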

Process Analysis (conversion funnel)

Analyze the conversion process:

Product view → Add to basket → Purchase

Segment by variant to identify differences in user behavior.
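The funnel segmentation can be sketched in Python as follows. The sketch assumes events are available as (session_id, variant, event_type) tuples, and the event type names are hypothetical. For simplicity, a session counts at a step if it contains that step and all earlier steps; the order of events within the session is ignored. Step rates then show where each variant loses users.

```python
from collections import defaultdict

# Hypothetical event type names for the three funnel steps.
FUNNEL = ["product_view", "add_to_basket", "order"]

def funnel_by_variant(events):
    """Count sessions reaching each funnel step, per variant.

    events: iterable of (session_id, variant, event_type) tuples.
    """
    steps_seen = defaultdict(set)  # (variant, session_id) -> event types seen
    for session_id, variant, event_type in events:
        steps_seen[(variant, session_id)].add(event_type)
    counts = defaultdict(lambda: [0] * len(FUNNEL))
    for (variant, _), seen in steps_seen.items():
        for i, step in enumerate(FUNNEL):
            if step not in seen:
                break  # the session dropped out of the funnel at this step
            counts[variant][i] += 1
    return dict(counts)

def step_rates(counts):
    """Share of sessions moving from each step to the next."""
    return [counts[i] / counts[i - 1] if counts[i - 1] else 0.0 for i in range(1, len(counts))]

events = [
    ("s1", "A", "product_view"), ("s1", "A", "add_to_basket"), ("s1", "A", "order"),
    ("s2", "A", "product_view"),
    ("s3", "B", "product_view"), ("s3", "B", "add_to_basket"),
    ("s4", "B", "product_view"), ("s4", "B", "add_to_basket"),
]
for variant, counts in sorted(funnel_by_variant(events).items()):
    print(variant, counts, step_rates(counts))
# A [2, 1, 1] [0.5, 1.0]
# B [2, 2, 0] [1.0, 0.0]
```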

Device Analysis

Compare performance across device types to identify inconsistencies or tracking issues.

Validation Checks

Before interpreting results, validate data quality:

Variant distribution is as expected (for example, an equal split)

Users remain in the same variant within a session

Tracking is consistent across all variants

All relevant events include variant information
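Two of these checks can be sketched in Python. The first check compares the observed split against an expected 50/50 split with a chi-square goodness-of-fit test (one degree of freedom, so the p-value is erfc(sqrt(chi2 / 2))). The second check lists sessions that appear with more than one variant. The function names, the (session_id, variant) input format, and the example counts are assumptions for illustration. How small a p-value should trigger an investigation is a choice for your team, not a value taken from Mapp Intelligence.

```python
import math
from collections import defaultdict

def split_p_value(count_a: int, count_b: int) -> float:
    """Chi-square goodness-of-fit test of two variant counts against an equal split.

    Returns the p-value; a very small value suggests the observed split is
    unlikely under a 50/50 assignment.
    """
    total = count_a + count_b
    if total == 0:
        raise ValueError("no observations")
    expected = total / 2
    chi2 = ((count_a - expected) ** 2 + (count_b - expected) ** 2) / expected
    # For 1 degree of freedom, P(X > chi2) = erfc(sqrt(chi2 / 2)).
    return math.erfc(math.sqrt(chi2 / 2))

def sessions_with_multiple_variants(events):
    """events: iterable of (session_id, variant); returns session IDs seen with more than one variant."""
    variants = defaultdict(set)
    for session_id, variant in events:
        variants[session_id].add(variant)
    return sorted(s for s, v in variants.items() if len(v) > 1)

# Illustrative inputs.
print(split_p_value(5000, 5000))  # 1.0
print(split_p_value(5000, 5300))  # small p-value: check the assignment
print(sessions_with_multiple_variants([("s1", "A"), ("s1", "A"), ("s2", "B"), ("s2", "A")]))  # ['s2']
```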